Serving Markdown version of pages to AI: Is it worth it?

Recently, Cloudflare released a new feature that enables websites to serve Markdown versions of pages to AI agents via content negotiation. But is this actually a good practice?

I applied a similar strategy on my personal website and did some research to better understand the advantages and disadvantages of making Markdown available through content negotiation.

Why consider Markdown for agents?

Before going through the implementation process, there are a few reasons why this approach should, or should not, be considered.

Cloudflare states that some agents, such as Claude Code and OpenCode, send requests with headers like:

{ headers: { Accept: "text/markdown, text/html" } }

This indicates that these agents can accept Markdown and may prioritize it if available.
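An Accept header can also carry q-values that rank the listed types. Here is a minimal sketch of how a server might read that preference. It assumes that a tie between text/markdown and text/html goes to Markdown, and it ignores wildcards such as */*. The names `parseAccept`, `qFor`, and `prefersMarkdown` are illustrative and not part of Astro or Cloudflare.

```typescript
// One media type from an Accept header, with its q-value (defaults to 1).
interface AcceptEntry {
  type: string;
  q: number;
}

// Split an Accept header into entries, reading an optional ";q=" parameter.
function parseAccept(header: string): AcceptEntry[] {
  return header
    .split(",")
    .map((part) => part.trim())
    .filter((part) => part.length > 0)
    .map((part) => {
      const [type = "", ...params] = part.split(";").map((s) => s.trim());
      let q = 1;
      for (const param of params) {
        const [key = "", value = ""] = param.split("=").map((s) => s.trim());
        if (key.toLowerCase() === "q") {
          const parsed = Number.parseFloat(value);
          if (!Number.isNaN(parsed)) q = parsed;
        }
      }
      return { type: type.toLowerCase(), q };
    });
}

// q-value for an exact media type, or 0 when it is not listed.
function qFor(entries: AcceptEntry[], type: string): number {
  const match = entries.find((entry) => entry.type === type);
  return match ? match.q : 0;
}

// Assumption: Markdown wins when it is listed and ranked at least as high as HTML.
function prefersMarkdown(header: string | null): boolean {
  if (!header) return false;
  const entries = parseAccept(header);
  const markdownQ = qFor(entries, "text/markdown");
  const htmlQ = qFor(entries, "text/html");
  return markdownQ > 0 && markdownQ >= htmlQ;
}
```

With this sketch, the header quoted above would select Markdown, while a typical browser header that never mentions text/markdown would not.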

However, this is not the case for many conventional crawlers such as Googlebot, and even OpenAI’s standard crawlers. I haven’t audited the exact Accept headers of every crawler or agent, but it’s generally understood that most agents still prioritize the browser version of the page, which is the full HTML.

If you explore Cloudflare Radar’s AI bot data, you can see how early and fragmented this ecosystem still is.

At this stage, most user agents do not appear to actively negotiate Markdown over HTML. In practice, the majority still consume full HTML documents.

Markdown for agents isn't an SEO practice

Where this becomes interesting is not SEO, but efficiency. With recent discussions around WebMCP, we’re starting to see a broader shift in how agents interact with web content. WebMCP itself is a separate concept, but it touches on a similar principle: reducing unnecessary overhead in machine-to-web interactions.

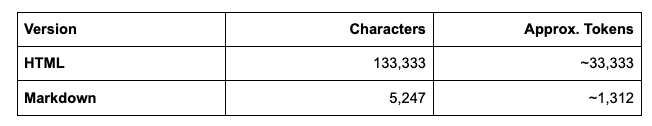

Processing full HTML documents is not particularly efficient for LLMs. In fact, it consumes significantly more tokens than serving a clean Markdown file.

The main argument in favor of Markdown here is computational efficiency:

1. Input Tokens: on average, 1 token corresponds to approximately 4 characters. An HTML page contains far more than just readable content. It includes markup, layout structure, scripts, styles, navigation, and metadata. All of that contributes to token usage.

2. Processing Complexity: a web page contains many more structural elements than the informational content itself. Even if an agent ultimately extracts only the meaningful text, it still has to process the entire document first. That introduces computational overhead.

3. Context Window Utilization: if you serve Markdown instead, you provide a cleaner and more direct representation of the content, which makes more efficient use of the context window. By delivering only the content that matters, you potentially leave more of that window for the model’s reasoning.
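To make the first point concrete, here is a rough sketch that applies the 4-characters-per-token rule of thumb to two representations of the same content. The `estimateTokens` helper and both sample strings are made up for illustration, and real tokenizers will give different counts.

```typescript
// Rough estimate only: assumes about 4 characters per token.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Hypothetical samples: the same heading and sentence as HTML and as Markdown.
const html = `<article class="post"><div class="wrapper"><h1 class="title">Hello</h1><p class="body">Markdown is lighter.</p></div></article>`;
const markdown = `# Hello\n\nMarkdown is lighter.`;

console.log("HTML estimate:", estimateTokens(html));
console.log("Markdown estimate:", estimateTokens(markdown));
```

Even in this tiny sample, most of the HTML characters are markup rather than content, and the gap grows once scripts, styles, and navigation are included.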

This discussion is primarily about content consumption efficiency, not about page interaction or search engine optimization. We’re not diving deeply into WebMCP here. Instead, it’s about offering a more efficient representation of the same content to agents that explicitly request Markdown instead of HTML.

At the moment, this remains experimental. Most agents still default to HTML. But as LLM web interactions evolve, exploring cleaner content delivery formats may become increasingly relevant, especially if the goal is to improve response quality through better context efficiency.

The Trade-offs of Content Negotiation

By implementing Markdown via content negotiation, I’m effectively providing a lower-token version of my website. But this doesn’t come without trade-offs.

The first change is architectural. With content negotiation in place, my blog posts are now rendered on demand by the server. The site is no longer fully pre-rendered as static HTML. That introduces additional complexity. For example, I can’t rely on the standard @astrojs/sitemap package without adjustments. What used to be straightforward static generation now requires more intentional configuration.

Caching also becomes more nuanced. I’m serving two representations from the same URL: HTML for browsers and traditional crawlers, and Markdown for agents that explicitly request it. Serving tailored content from a single URL isn’t unusual; it’s actually how content negotiation is supposed to work. It does, however, require careful handling to avoid cache inconsistencies, such as a cache handing a stored Markdown response to a browser.
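The standard HTTP way to tell caches that a response depends on a request header is the Vary response header. Below is a minimal sketch of a helper that adds Accept to an existing Vary value without duplicating it. `mergeVary` is a hypothetical name, and whether a particular CDN honors Vary: Accept is something to verify for your own setup.

```typescript
// Add a field name to a Vary header value, keeping any fields already listed.
function mergeVary(existing: string | null, field: string): string {
  const fields = (existing ?? "")
    .split(",")
    .map((f) => f.trim())
    .filter((f) => f.length > 0);

  // "Vary: *" already means the response varies on everything.
  if (fields.includes("*")) return "*";

  // Header field names are case-insensitive, so compare in lowercase.
  const alreadyListed = fields.some(
    (f) => f.toLowerCase() === field.toLowerCase(),
  );
  if (!alreadyListed) fields.push(field);

  return fields.join(", ");
}

console.log(mergeVary("Accept-Encoding", "Accept"));
```

Both the HTML and the Markdown response would need to carry the resulting value, since a cache decides which stored copy to reuse based on the headers of the response it already holds.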

It’s also important to clarify who this benefits.

Traditional crawlers are largely unaffected. Search engines still crawl links from page to page, download HTML, render the page much like a browser would, and evaluate structure, layout, performance, and discoverability. Their concern is whether the content can be indexed correctly and whether it reflects what a user sees.

AI agents operate differently. They are not trying to render a page visually. They ingest its content and process it through a language model. In that context, what matters most is the semantic content itself (the meaning of the text) and how efficiently it can be processed. Fewer tokens mean lower computational cost. Cleaner structure means less ambiguity. Markdown naturally aligns with those priorities because it removes structural noise and presents content in a more direct form.

The key difference, then, is this: search engines care about rendering and indexing. AI agents care about semantic extraction and efficiency. Understanding that distinction is essential before deciding whether introducing content negotiation is worth the added complexity.

Step-by-Step: How I Added a Markdown Version to My Astro + Sanity Website

I recently added a Markdown representation of my blog posts to my personal website (Astro + Sanity). I wanted to see what it looks like to offer a low-token version of the same content for clients that explicitly ask for Markdown via content negotiation.

This post is a walkthrough of exactly what I implemented, and the few trade-offs I ran into along the way.

Prerequisites (What My Setup Looked Like)

Before I changed anything, my site already had:

- A working Astro project connected to a Sanity.io backend

- Blog posts routed with Astro’s file-based routing (ex: /insights/[slug])

- A standard HTML blog page rendering Sanity content

If your setup is similar, you’ll be able to follow this pretty closely.

Step 1: Create the Markdown API Endpoint

The first thing I needed was a dedicated route that:

- Fetches content from Sanity

- Converts Portable Text to Markdown

- Returns it with the correct Content-Type

For organization, I placed this inside a markdown directory.

File: src/pages/markdown/insights/[slug].md.ts

import type { APIRoute } from "astro";
import { loadQuery } from "../../../sanity/lib/load-query";
import { portableTextToMarkdown } from "@portabletext/markdown";
import type { SanityDocument } from "@sanity/client";

// This route will be rendered on-demand at request time.
export const prerender = false;

interface Post extends SanityDocument {
  body: any;
}

export const GET: APIRoute = async ({ params }) => {
  const { slug } = params;

  try {
    const { data: post } = await loadQuery<Post>({
      query: `*[_type == "post" && slug.current == $slug][0]{ body }`,
      params: { slug },
    });

    if (!post || !post.body) {
      return new Response("Not found", { status: 404 });
    }

    const markdown = portableTextToMarkdown(post.body);

    return new Response(markdown, {
      status: 200,
      headers: {
        "Content-Type": "text/markdown; charset=utf-8",
      },
    });
  } catch (error) {
    return new Response("Internal Server Error", { status: 500 });
  }
};

This route always returns Markdown. It’s clean, explicit, and easy to test.

The important detail here is:

export const prerender = false;

That tells Astro this route must run dynamically at request time.

Step 2: Forcing Dynamic Rendering

At first, I ran into a ForbiddenRewrite error.

The reason? Astro middleware can only rewrite between routes that are both dynamically rendered. By default, Astro tries to pre-render pages as static HTML. So I was trying to rewrite from a dynamic request to a static file.

That doesn’t work.

The fix was simple, but not obvious at first.

I had to make my blog post page dynamic as well.

File: src/pages/insights/[slug].astro

---
// Same Sanity helper as in Step 1; this relative path is assumed from the file location.
import { loadQuery } from "../../sanity/lib/load-query";
import PortableText from "../../components/PortableText.astro";
import ReadMore from "../../components/ReadMore.astro";
import FAQSection from "../../components/FAQSection.astro";
import KeyTakeaways from "../../components/KeyTakeaways.astro";

// Render this page on demand so the middleware is allowed to rewrite it.
export const prerender = false;

export async function getStaticPaths() {
  const { data: posts } = await loadQuery({
    query: `*[_type == "post"]`,
  });
  ...
}
---

Once prerender = false is added, Astro ignores getStaticPaths() for static generation and switches the page to on-demand rendering.

Now both:

- /insights/[slug]

- /markdown/insights/[slug].md

are dynamic.

That unlocks middleware rewriting.

Step 3: The Middleware

This is where everything comes together. I created a middleware file that intercepts requests and decides what to do based on:

- The URL

- The Accept header

File: src/middleware.ts

import { defineMiddleware } from 'astro:middleware';

export const onRequest = defineMiddleware((context, next) => {
  const { url, request } = context;
  const { pathname } = url;

  // Rule 1: If URL ends with .md
  if (pathname.startsWith('/insights/') && pathname.endsWith('.md')) {
    const slug = pathname.replace('/insights/', '').replace('.md', '');
    const newPath = `/markdown/insights/${slug}.md`;
    return context.rewrite(newPath);
  }

  // Rule 2: If Accept header requests markdown
  if (pathname.startsWith('/insights/') && !pathname.endsWith('.md')) {
    const acceptHeader = request.headers.get('accept');
    if (acceptHeader && acceptHeader.includes('text/markdown')) {
      const slug = pathname.replace('/insights/', '');
      const newPath = `/markdown/insights/${slug}.md`;
      return context.rewrite(newPath);
    }
  }

  return next();
});

What this does:

- /insights/post.md → always rewritten to Markdown handler

- /insights/post + Accept: text/markdown → rewritten

- Everything else → continues as normal HTML

One canonical URL. Two formats. Clean separation.
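If you want to unit test these rules without running Astro, one option is to pull the decision into a pure function. The sketch below mirrors the two rules above under a hypothetical name, `resolveMarkdownPath`. It differs from the middleware in two small ways that are my own assumptions: it strips a trailing slash from the slug and it returns null for an empty slug.

```typescript
// Returns the internal Markdown route for a request, or null to serve HTML.
function resolveMarkdownPath(
  pathname: string,
  accept: string | null,
): string | null {
  const prefix = "/insights/";
  if (!pathname.startsWith(prefix)) return null;

  let slug = pathname.slice(prefix.length);

  // Rule 1: an explicit .md suffix always means Markdown.
  const explicitMd = slug.endsWith(".md");
  if (explicitMd) slug = slug.slice(0, -".md".length);

  // Assumption: tolerate trailing slashes and ignore the bare /insights/ index.
  slug = slug.replace(/\/+$/, "");
  if (slug.length === 0) return null;

  // Rule 2: otherwise, look for text/markdown in the Accept header.
  const acceptsMarkdown = (accept ?? "").toLowerCase().includes("text/markdown");

  return explicitMd || acceptsMarkdown ? `/markdown/insights/${slug}.md` : null;
}

console.log(resolveMarkdownPath("/insights/post", "text/markdown, text/html"));
```

The middleware could then call this function and pass any non-null result to context.rewrite(), falling through to next() otherwise.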

Step 4: Testing Everything

I verified both behaviors just by adjusting the Accept header (you can also set a specific User-Agent):

# Ask for markdown
curl -L -H "Accept: text/markdown" http://localhost:4321/insights/your-post

# Ask for HTML
curl -L -H "Accept: text/html" http://localhost:4321/insights/your-post

Final Considerations

Serving the raw Markdown version of the blog post instead of the full HTML results in a token reduction of approximately 96%. This represents a significant saving in both the computational cost and time required for an AI agent to process the page's core content.

- Approximate Tokens Saved: 32,021

- Reduction Percentage: ~96%
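For reference, the reduction percentage is the tokens saved divided by the HTML token count. The post reports only the savings and the rounded percentage, so the two totals in this sketch are hypothetical values chosen to be consistent with those figures, not measured numbers. `tokenReduction` is an illustrative helper name.

```typescript
// Compute absolute and relative token savings between two representations.
function tokenReduction(
  htmlTokens: number,
  markdownTokens: number,
): { saved: number; percent: number } {
  if (htmlTokens <= 0) throw new RangeError("htmlTokens must be positive");
  const saved = htmlTokens - markdownTokens;
  return { saved, percent: (saved / htmlTokens) * 100 };
}

// Hypothetical totals, picked only so that they match the reported savings.
const result = tokenReduction(33355, 1334);
console.log(`saved ${result.saved} tokens, about ${Math.round(result.percent)}%`);
```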

While there are significant computational gains from token savings, it's clear that Googlebot currently prefers to fetch and render a page just as a human user would. Although this standard may evolve, for now, I have instructed Googlebot via robots.txt not to access the raw Markdown versions.

# Default for all bots (except Googlebot)
User-agent: *
Disallow: /studio/

Sitemap: https://carolinescholles.com/sitemap-index.xml
Sitemap: https://carolinescholles.com/sitemap.md

# Specific rules for Googlebot
User-agent: Googlebot
Disallow: /studio/
Disallow: /insights/*.md$
Disallow: /markdown/
Disallow: /sitemap.md

Sitemap: https://carolinescholles.com/sitemap-index.xml

References

- webMCP: Efficient AI-Native Client-Side Interaction for Agent-Ready Web Design

- Markdown Routes with Next.js

- Cloudflare just shipped HTML-to-markdown for AI agents. I built it from the content layer instead.

- Real-time content conversion to Markdown at the source using content negotiation headers

- What are tokens and how to count them?

How does serving Markdown improve AI efficiency?

Markdown significantly reduces token consumption, often by 80% or more (about 96% in the measurement above), by stripping away HTML boilerplate like scripts, styles, and nested tags. This allows AI models to process the semantic content more accurately and fit more information into their context windows.

Is serving Markdown to bots considered a good SEO practice?

Currently, no. Search engines like Google prioritize the full HTML version to understand page layout and user experience. While it helps AI agents, Google representatives have expressed skepticism, noting that bots may struggle to parse links or structure correctly in Markdown compared to standard HTML.

Which AI tools actually use the Markdown 'Accept' header?

Modern developer-focused AI agents, such as Claude Code and OpenCode, are among the first to explicitly include 'text/markdown' in their headers. Most general-purpose crawlers, like GPTBot, still default to traditional HTML for the time being.

What are the main technical challenges of implementing this?

The primary trade-off is architectural complexity. Sites often have to switch from static pre-rendering to dynamic, on-demand rendering. Additionally, developers must carefully manage caching using the 'Vary: Accept' header to ensure browsers don't accidentally receive Markdown intended for bots.
